For five generations, Apple Silicon scaled the same way. Take a die. Add cores. Shrink the node. The formula worked brilliantly — so brilliantly that each M-series release started to feel less like an engineering event and more like a product announcement with predetermined results. Faster. More efficient. Better battery life. Repeat.

The M5 Pro and M5 Max broke that formula.

When Apple announced the new MacBook Pro with M5 Pro and M5 Max in early March 2026, the headline numbers were predictably impressive: up to 30 percent faster CPU performance, over 4x the AI compute of the previous generation, 50 percent higher graphics throughput in certain workflows. But the numbers aren't the story. The story is how Apple achieved them, and what that signals about where Apple Silicon is going from here.

What Is Apple Fusion Architecture — and Why Does It Matter?

Apple has used multi-die packaging before. The M1 Ultra, M2 Ultra, and M3 Ultra all bonded two Max dies together using what Apple called UltraFusion — but that technology was reserved for the Ultra tier, the highest-end configurations destined for Mac Studio and Mac Pro. The Pro and Max chips, which power the MacBook Pro and sit at the core of Apple's most commercially important professional products, always used a single die.

That changed with the M5 generation. The M5 Pro and M5 Max are built using what Apple is calling Fusion Architecture — two third-generation 3-nanometer dies bonded into a single SoC using advanced packaging with high-bandwidth, low-latency interconnects. macOS treats the result as a single unified chip, with the CPU, GPU, Neural Engine, unified memory controller, Media Engine, and Thunderbolt 5 capabilities distributed across both dies.

The significance of this shift is easy to understate. Apple explicitly skipped an M4 Ultra entirely — something it had never done before — and that absence wasn't an oversight. It was preparation. Rather than continuing to scale a single die upward, Apple spent the M4 generation rearchitecting how the Pro and Max chips work at a fundamental level. The Apple M5 chip's Fusion Architecture debuts not at the Ultra tier but one rung below it, which means every MacBook Pro M5 buyer gets the benefit.

Architosh, which covers Apple Silicon closely for design and engineering audiences, called it "the biggest chip design update since the original M-series debuted." That framing holds up when you look at what the architecture unlocks.

M5 Pro vs M4 Pro: What the Fusion Architecture Actually Changes

The most visible benefit is in CPU design. The M5 Pro and M5 Max both feature an 18-core CPU with six "super cores" and 12 new performance cores — up from the 14-core M4 Pro and 16-core M4 Max. But the raw core count increase isn't the interesting part.

Previous M-series chips used a two-tier core structure: "performance" cores for demanding single-threaded work, and low-power "efficiency" cores for background tasks. The M5 Pro and M5 Max eliminate efficiency cores entirely. In their place: a new class of performance cores optimized for sustained multithreaded work at the pro level, and super cores, Apple's new name for what used to be called performance cores, now with a meaningful leap in single-threaded capability through improved front-end bandwidth, a new cache hierarchy, and enhanced branch prediction.

There are now three CPU core types across the M5 family: Efficiency (still present in the base M5), Performance, and Super. The base M5 has six Efficiency cores and four Super cores. The Pro and Max variants have no Efficiency cores at all — just Performance and Super. This is a deliberate design choice, not a simplification. MacBook Pro workloads don't benefit from efficiency-optimized background cores the way consumer devices do, and the Fusion Architecture gave Apple room to optimize the die allocation accordingly.
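The core layouts described above can be written down as a small table and checked against the stated totals. This is a minimal sketch: the `CpuLayout` class and `describe` helper are illustrative names, not anything Apple ships, and the zero Performance-core count for the base M5 is inferred from the sentence above rather than stated outright.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuLayout:
    """Core counts per type, as reported in the article."""
    efficiency: int
    performance: int
    super_cores: int

    @property
    def total(self) -> int:
        return self.efficiency + self.performance + self.super_cores


# Counts come from the text; the base M5's performance=0 is an inference.
LAYOUTS = {
    "M5": CpuLayout(efficiency=6, performance=0, super_cores=4),
    "M5 Pro": CpuLayout(efficiency=0, performance=12, super_cores=6),
    "M5 Max": CpuLayout(efficiency=0, performance=12, super_cores=6),
}


def describe(name: str) -> str:
    """Return a one-line summary that lists only the core types present."""
    layout = LAYOUTS[name]
    kinds = (
        (layout.super_cores, "Super"),
        (layout.performance, "Performance"),
        (layout.efficiency, "Efficiency"),
    )
    parts = [f"{count} {label}" for count, label in kinds if count]
    return f"{name}: {layout.total} cores ({', '.join(parts)})"


for chip in LAYOUTS:
    print(describe(chip))
```

Laid out this way, the split is easy to see: the base chip pairs Super cores with Efficiency cores, while the Pro and Max pair them with Performance cores and reach the 18-core total.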

The result is up to 30 percent faster multithreaded performance versus M4 Pro, and 2.5 times the multithreaded throughput of M1 Pro and M1 Max.

The M5 GPU Redesign Is Really About On-Device AI

The GPU numbers are where things get genuinely surprising. On paper, the M5 Pro supports up to 20 GPU cores and the M5 Max scales to 40, identical maximum counts to the M4 generation. Same core counts, meaningfully different chip. The architecture is the difference.

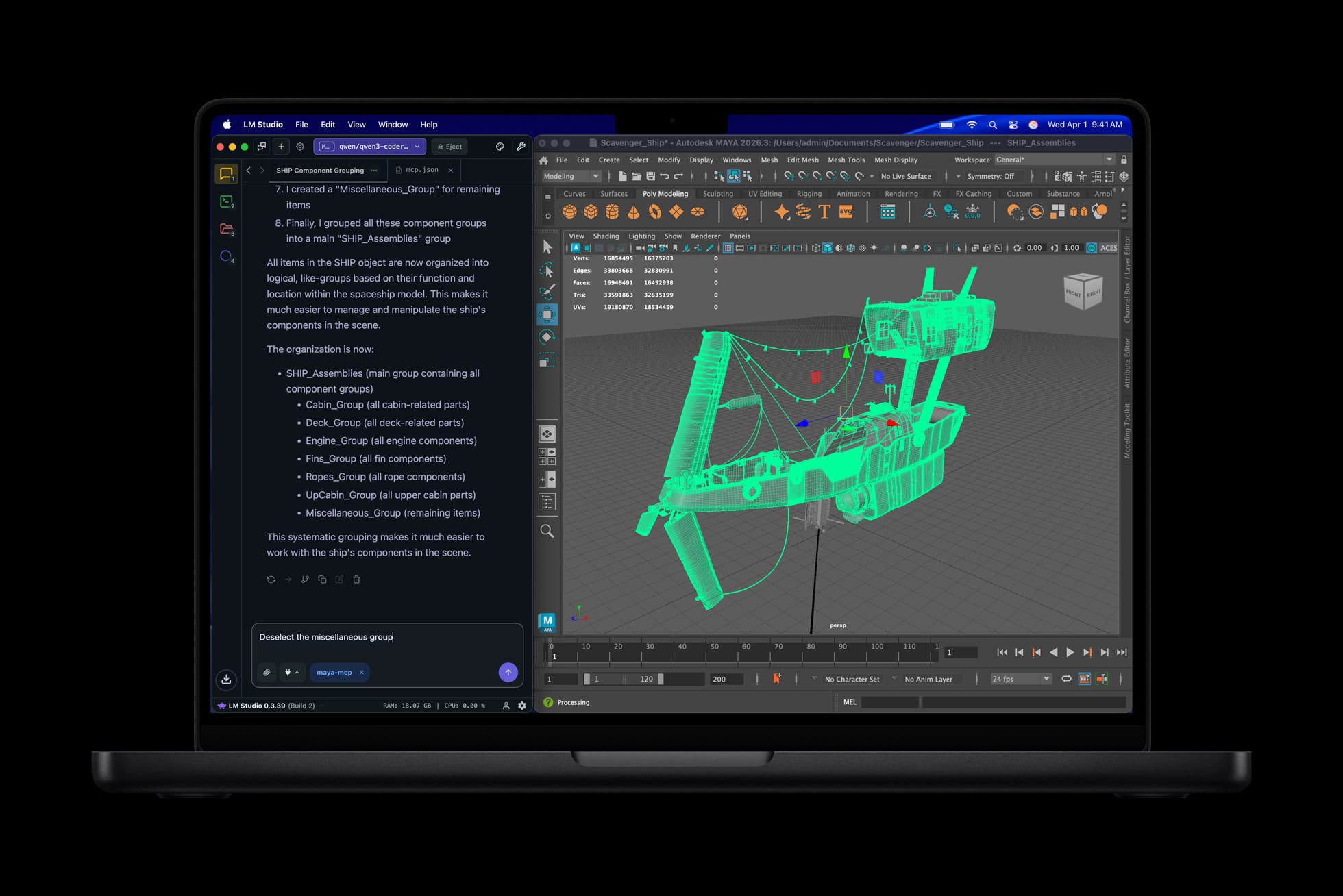

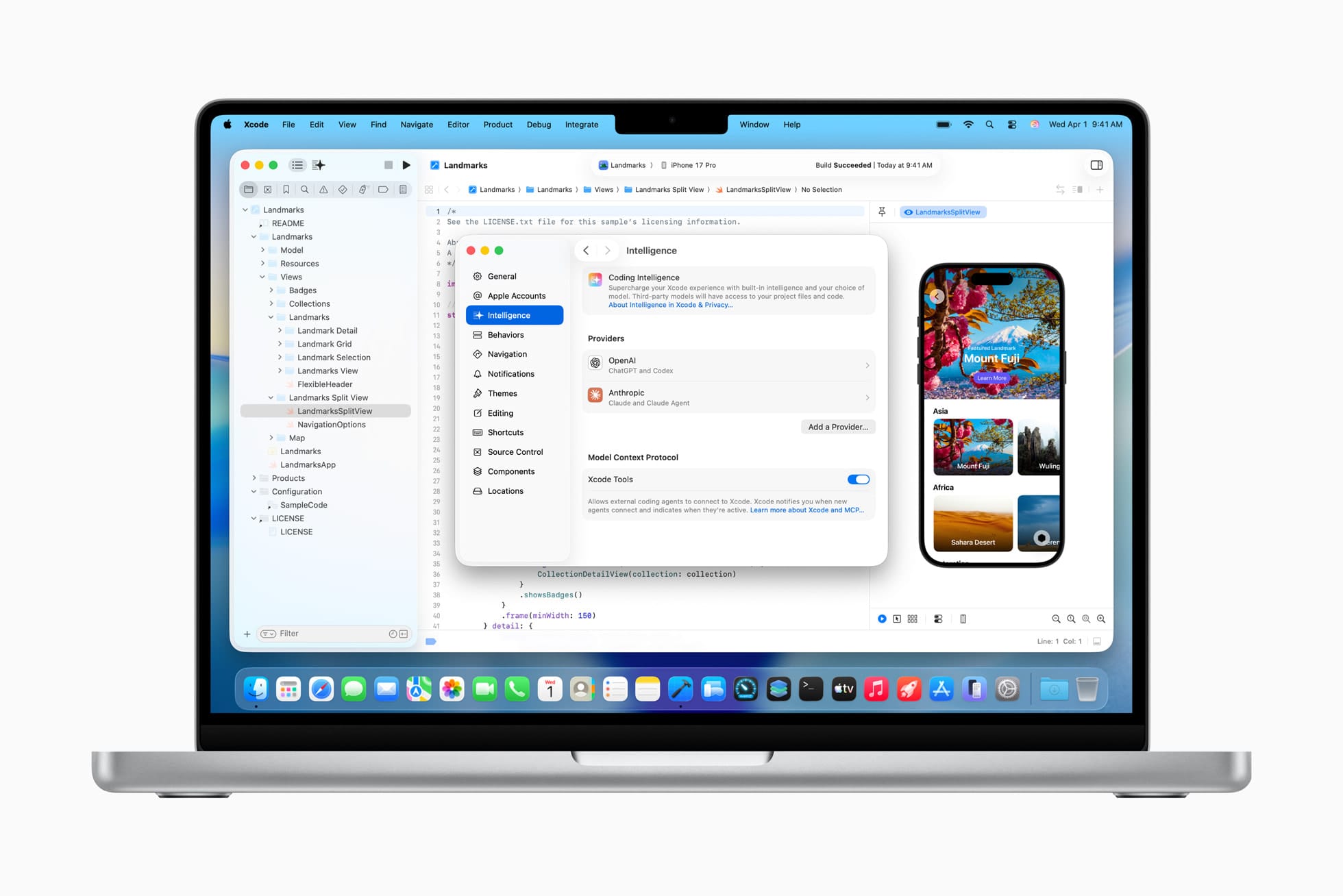

Apple introduced a critical change in the base M5 chip last October: a dedicated Neural Accelerator integrated into every single GPU core. That architecture scales directly into the M5 Pro and M5 Max with the Fusion generation, and the implications are substantial. The M5 Pro and M5 Max deliver over 4x the peak GPU compute for AI tasks compared to their M4 counterparts — and over 6x compared to M1 Pro and M1 Max. These aren't incremental gains made possible by a new process node. They're structural gains from a fundamental redesign of how AI workloads interact with the GPU.

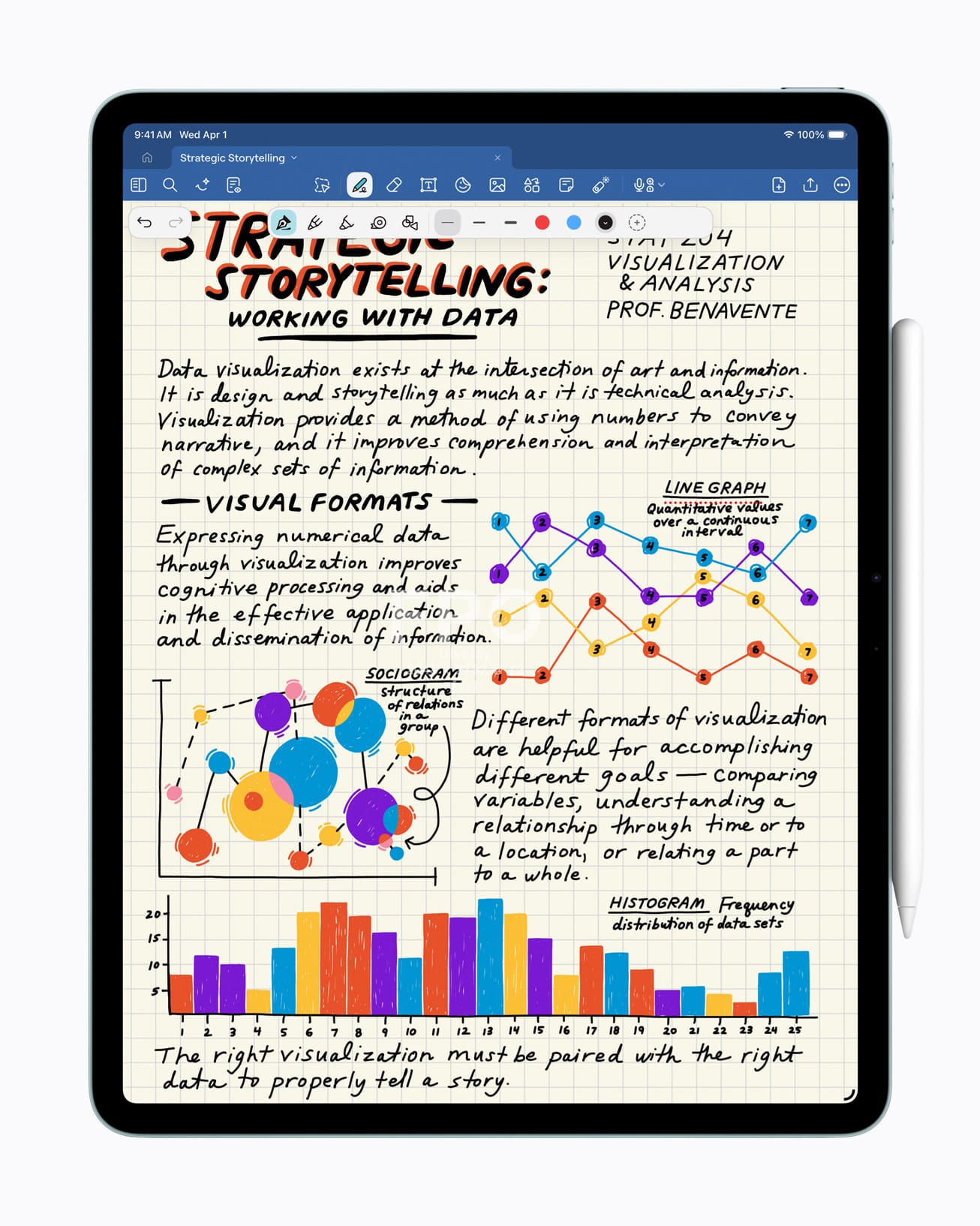

For developers and AI researchers running local models, the practical translation is direct: Apple claims 4x faster LLM prompt processing versus M4 Pro and M4 Max, and 8x faster AI image generation compared to M1-generation chips. These workloads — inference, diffusion models, on-device training — are no longer edge cases. They're core to how creative professionals, engineers, and researchers work. The M5 generation treats them as first-class workloads, not afterthoughts.

On traditional graphics performance, the gains are also real: up to 20 percent higher than M4 Pro and M4 Max for general workloads, up to 35 percent for ray-tracing applications via Apple's third-generation ray-tracing engine, and hardware-accelerated mesh shading available for the first time in this tier.

M5 Max Unified Memory Bandwidth: The Number That Actually Matters

One of the quieter advantages of the Fusion Architecture is what it does for memory bandwidth. The M5 Pro supports up to 64GB of unified memory — up from 48GB on M4 Pro — with bandwidth reaching 307GB/s. The M5 Max retains its 128GB ceiling but raises bandwidth to 614GB/s.

These aren't numbers to gloss over. Memory bandwidth is the actual bottleneck for large AI model inference and high-resolution video workflows. The M4 Pro's 273GB/s ceiling was already class-leading among laptop-class chips; the M5 Pro's 307GB/s pushes it further. The M5 Max's 614GB/s is approaching territory that, until very recently, required discrete GPU solutions with dedicated VRAM — the kind of hardware that occupies desktop towers, not 16-inch notebooks.
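To see why bandwidth sets the ceiling for local inference, a common rule of thumb helps: when a dense model generates one token at a time, every weight has to be read from memory once per token, so throughput cannot exceed bandwidth divided by model size. The sketch below applies that rule to the bandwidth figures quoted above. The 70-billion-parameter, 4-bit model is a hypothetical example, and the estimate ignores KV-cache traffic, compute limits, and real-world efficiency, so treat the results as upper bounds rather than benchmarks.

```python
def decode_tokens_per_second(
    bandwidth_gb_s: float, params_billions: float, bits_per_weight: float
) -> float:
    """Upper bound on single-stream decode speed for a dense model.

    Assumes each generated token requires reading all weights once.
    """
    model_gb = params_billions * bits_per_weight / 8  # decimal gigabytes
    return bandwidth_gb_s / model_gb


def percent_gain(new: float, old: float) -> float:
    """Relative increase of new over old, in percent."""
    return (new / old - 1.0) * 100.0


# Bandwidth figures from the article, in GB/s.
BANDWIDTH = {"M4 Pro": 273.0, "M5 Pro": 307.0, "M5 Max": 614.0}

# Hypothetical workload: 70B parameters quantized to 4 bits per weight.
for chip, gb_s in BANDWIDTH.items():
    rate = decode_tokens_per_second(gb_s, params_billions=70, bits_per_weight=4)
    print(f"{chip}: at most {rate:.1f} tokens/s")

gain = percent_gain(BANDWIDTH["M5 Pro"], BANDWIDTH["M4 Pro"])
print(f"M5 Pro vs M4 Pro bandwidth: +{gain:.1f}%")
```

Under these assumptions the hypothetical 35GB model tops out at roughly 8.8 tokens per second on the M5 Pro and about 17.5 on the M5 Max, which is why the bandwidth figure matters more for this kind of work than core counts do.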

The unified memory architecture — where CPU, GPU, and Neural Engine all share the same pool rather than copying data between discrete memory subsystems — remains Apple's most underappreciated structural advantage. The Fusion Architecture scales it without compromising it. Two dies, one memory pool, no transfer overhead. That's the engineering achievement here as much as any individual performance number.

Why the Apple M5 Chip Generation Is Different

It's tempting to read the M5 announcement as Apple catching up to a competitive market — Qualcomm's Snapdragon X Elite made real inroads in the Windows laptop space, and Nvidia's dominance in AI compute hardware created a narrative that Apple Silicon was falling behind for serious AI work. There's some truth to that framing, but it misses the more important development.

Apple didn't iterate its way to the M5 Pro and M5 Max. It paused, redesigned the architecture, and re-emerged with a chip that scales in a fundamentally different way than its predecessors. The Fusion Architecture is not a ceiling-raising maneuver — it's a platform decision. The same multi-die approach that debuts in the M5 Pro and M5 Max will almost certainly define how Apple scales to future Ultra-tier chips, desktop silicon, and eventually whatever comes next in the Apple Intelligence compute roadmap.

For the MacBook Pro buyer today, the performance case is clear. For the Apple Silicon watcher, the more interesting question is what Apple builds on top of this architecture over the next two or three generations. Fusion Architecture at the Pro/Max tier means the company now has a scalable packaging strategy that doesn't require ever-larger single dies. It can mix and match. It can optimize each die for different workload profiles. It can scale core counts further without the yield and thermal penalties that constrain monolithic chip design.
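The yield argument can be made concrete with the textbook Poisson yield model, in which the fraction of defect-free dies falls off exponentially with die area. This is a rough sketch, not Apple's or its foundry's actual numbers: the total silicon area and defect density below are hypothetical, and the model assumes dies are tested before bonding while ignoring packaging losses and the area spent on the die-to-die interconnect.

```python
import math


def poisson_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies with zero defects under the Poisson yield model."""
    return math.exp(-area_cm2 * defects_per_cm2)


def silicon_per_good_chip(
    total_area_cm2: float, n_dies: int, defects_per_cm2: float
) -> float:
    """Wafer area consumed, on average, to obtain one fully working chip.

    Assumes the chip is split into n_dies equal dies and that bad dies
    are discarded individually before bonding (known-good-die testing).
    """
    die_area = total_area_cm2 / n_dies
    good_fraction = poisson_yield(die_area, defects_per_cm2)
    return n_dies * die_area / good_fraction


# Hypothetical values chosen only to illustrate the trend.
TOTAL_AREA_CM2 = 5.0
DEFECT_DENSITY = 0.1  # defects per square centimeter

for n in (1, 2):
    cost = silicon_per_good_chip(TOTAL_AREA_CM2, n, DEFECT_DENSITY)
    print(f"{n} die(s): {cost:.2f} cm^2 of silicon per good chip")
```

With these made-up inputs, splitting the same logic across two dies needs noticeably less wafer area per working chip than one large die, and the gap widens as total area grows. That is the constraint a multi-die approach is meant to relax.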

Apple said M5 Pro and M5 Max are "engineered from the ground up for AI." That's marketing language, but for once it isn't wrong. The Neural Accelerators in every GPU core, the elimination of efficiency cores in favor of performance-focused architecture, the higher memory bandwidth: these aren't features assembled for a press release. They're the visible outputs of an architectural decision Apple made somewhere around the M4 generation, when it decided the single-die formula had gone as far as it could go.

What Apple shipped this week is the result of that decision. It's the most technically interesting Apple Silicon generation since M1, and it's probably not close.
